Concise and insightful SEO tutorial

Surprisingly, you only need to know a little to understand how SEO works. In this article, you'll find just enough information to design an SEO strategy that brings meaningful benefit to your business.

Table of contents

- How can you find out whether SEO is a good channel for your business?

- How does Google's ranking algorithm work?

- What do you need to know about links?

- How to create killer content and get Google to like it

- How does user experience affect your SEO efforts?

- Black Hat SEO methods and why you should avoid them

If you prefer watching over reading, this article is also available as a YouTube video.

SEO Potential

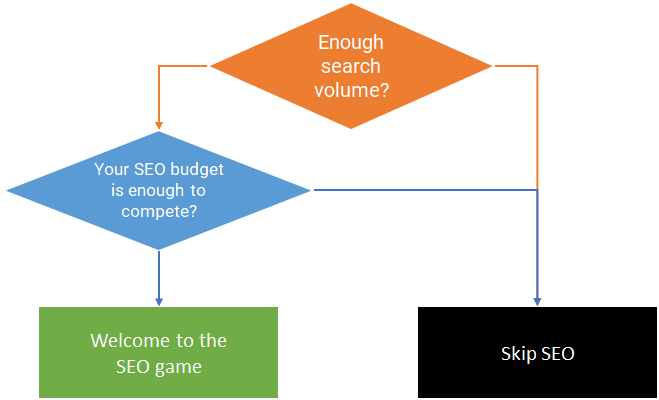

First, let’s figure out whether your business has meaningful SEO potential. Some businesses are better off skipping SEO entirely, since it can't bring in enough traffic to make sense for them.

You need to assess two conditions before jumping into the SEO game:

Condition 1

There must be a number of topics related to your business that have a large search volume. This determines how much traffic you can bring to your website through SEO. To find related topics, you need to conduct keyword analysis. Check this guide to learn how to do it.

Condition 2

Condition #2 is about competition. The top spots get drastically more traffic than the bottom ones, so you need to rank your content as high as possible. If your business is in a niche where competitors already invest millions of dollars in SEO and you can't match that, you're most likely doomed. Most SEO tools (e.g. Ahrefs, Semrush) provide an indicator called Keyword Difficulty, which shows how hard it is to rank your content for a particular keyword. There’s more about SEO competition later in this article, so keep reading.
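The two conditions can be combined into a rough traffic estimate: for each related keyword, multiply its monthly search volume by the share of searchers who click the result at a given position. The sketch below is a minimal illustration in Python. The click-through rates per position and the keyword volumes are hypothetical placeholders, not measured data, so replace them with numbers from your own keyword research.

```python
# Hypothetical click-through rates by ranking position (illustrative placeholders,
# not measured data). Replace them with figures from your own research.
ILLUSTRATIVE_CTR = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05,
                    6: 0.04, 7: 0.03, 8: 0.03, 9: 0.02, 10: 0.02}


def estimated_monthly_visits(search_volume: int, position: int,
                             ctr_by_position: dict[int, float] = ILLUSTRATIVE_CTR) -> float:
    """Expected monthly clicks for one keyword at a given position (0 beyond page one)."""
    return search_volume * ctr_by_position.get(position, 0.0)


def traffic_potential(keywords: dict[str, int], position: int) -> float:
    """Sum expected visits over all related keywords if we held `position` for each."""
    return sum(estimated_monthly_visits(volume, position) for volume in keywords.values())


# Hypothetical keyword list with monthly search volumes.
keywords = {"roas calculation": 2000, "roas formula": 1500, "what is roas": 3000}

for pos in (1, 3, 10):
    print(f"position {pos}: ~{round(traffic_potential(keywords, pos))} visits/month")
```

Comparing the totals for position 1 and position 10 shows why Condition #2 matters: under these assumptions, the same keywords deliver only a fraction of the traffic from the bottom of page one.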

Google ranking algorithm fundamentals

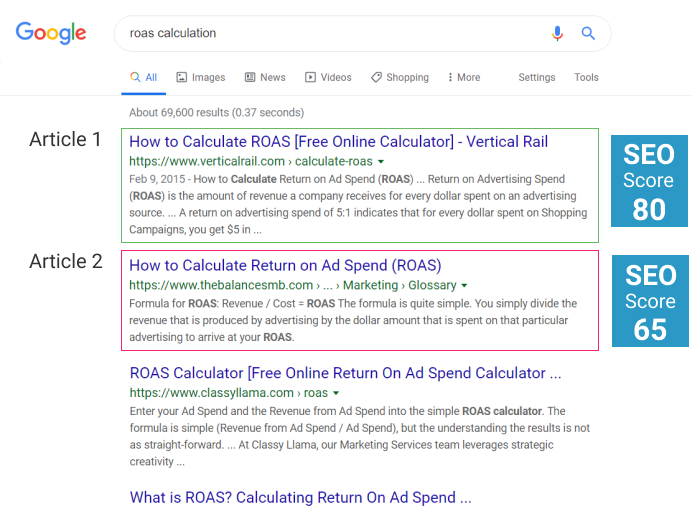

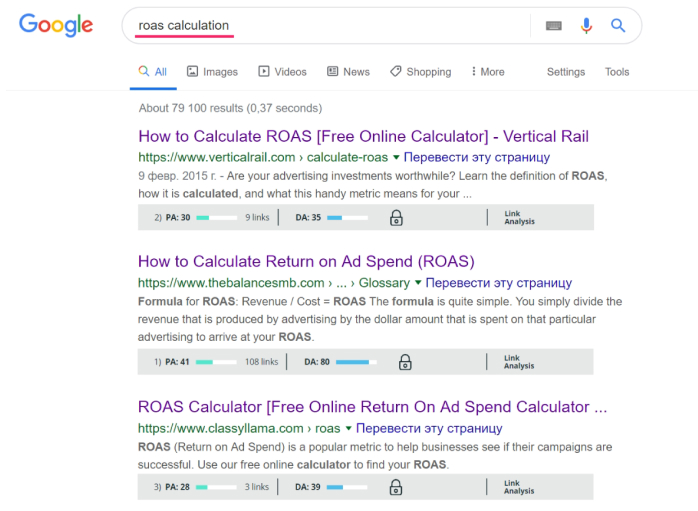

Let’s consider an example. This is the first page of Google search results for the keyword “ROAS calculation”. Google decided that Article 1 is the most relevant and useful result for this topic, so we can say that it has the highest SEO score. Article 2 is slightly less relevant, so it has a lower SEO score, and so on.

But why exactly did Google decide that Article 1 is better than Article 2? Let’s try to reverse engineer the ranking algorithm step by step.

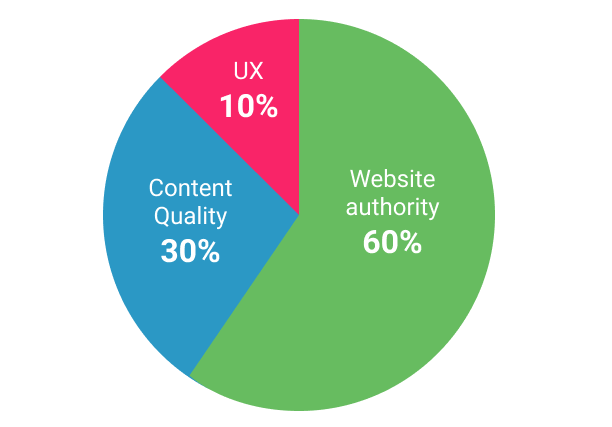

First, Google looks at the authority of the website each article is published on. You can think of a website’s authority as its reputation as a thought leader; it depends on the number and quality of external links from other websites. When one website links to another, it is said to pass “link juice”. The more authoritative the linking website, the more “link juice” it passes.
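The idea of “link juice” goes back to PageRank, the link-analysis formula published by Google's founders. Google's current ranking system is not public, so the following is only a toy sketch of the classic PageRank iteration in Python: each page repeatedly shares its score among the pages it links to. All site names are hypothetical.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    """Classic simplified PageRank: each page splits its score evenly among its outgoing links."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page gets a small baseline share (the "random jump" term).
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its score over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical link graph: bigmedia is linked by three sites, smallblog by none.
links = {
    "partner1.example": ["bigmedia.example"],
    "partner2.example": ["bigmedia.example"],
    "partner3.example": ["bigmedia.example"],
    "bigmedia.example": ["yourblog.example"],
    "smallblog.example": ["competitor.example"],
    "yourblog.example": [],
    "competitor.example": [],
}
ranks = pagerank(links)
for site, score in sorted(ranks.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{site:22} {score:.3f}")
```

Both yourblog.example and competitor.example receive exactly one link, but yourblog.example ends up with the higher score because its link comes from a page that is itself well linked.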

Next, Google vets the content. Google is very good at understanding the context and intent behind each search query, so you can think of it as having a prototype of an “ideal” article that answers that query. When we publish a new article, Google compares its content to that “ideal” article. The more similar they are, the higher our article’s content quality score. Google also tends to favor websites dedicated to a single topic, so an article on such a website would rank higher than a comparable article on a website that covers many different topics.
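Google has not published how it compares a page with its notion of an “ideal” answer, and it certainly uses far richer language models than this. Still, the basic idea of measuring how similar two texts are can be sketched in Python with a bag-of-words cosine similarity. The “ideal” text and both drafts below are hypothetical.

```python
import math
import re
from collections import Counter


def term_counts(text: str) -> Counter:
    """Lowercase the text and count word occurrences."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two term-count vectors (1.0 = identical word mix)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical texts: a stand-in for the "ideal" answer and two drafts of our article.
ideal = "ROAS is revenue divided by ad spend. Use a ROAS calculator to compare campaigns."
draft_a = "To calculate ROAS, divide revenue by ad spend; our calculator compares campaigns."
draft_b = "Our agency offers great marketing services at competitive prices."

sim_a = cosine_similarity(term_counts(ideal), term_counts(draft_a))
sim_b = cosine_similarity(term_counts(ideal), term_counts(draft_b))
print(f"on-topic draft: {sim_a:.2f}, off-topic draft: {sim_b:.2f}")
```

The on-topic draft scores well above zero, while the off-topic draft shares no words with the reference and scores 0.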

Finally, Google pays close attention to user experience. How many seconds does your page take to load? Is it mobile-friendly? Do people stop searching for more information on the topic after they’ve seen your article? Better user experience gives you a better SEO score.

Back to our example: now we understand why Google sorted these articles in this order. It simply calculated an SEO score for each one based on the criteria we just discussed.

It’s hard to pin down the exact influence of these three factors on the final SEO score, but in my experience website authority accounts for roughly 60% of it, content quality for 30%, and UX signals for 10%.
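To make these proportions concrete, here is the estimate expressed as a weighted sum in Python. The 60/30/10 weights are the author's experience-based guess from the paragraph above, not a published Google formula, and the 0-100 sub-scores for the two pages are hypothetical.

```python
# Weights from the rough estimate above (an experience-based guess,
# not a published Google formula).
WEIGHTS = {"authority": 0.60, "content": 0.30, "ux": 0.10}


def seo_score(authority: float, content: float, ux: float) -> float:
    """Combine three sub-scores (each on a hypothetical 0-100 scale) into one score."""
    for value in (authority, content, ux):
        if not 0 <= value <= 100:
            raise ValueError("sub-scores must be between 0 and 100")
    return (WEIGHTS["authority"] * authority
            + WEIGHTS["content"] * content
            + WEIGHTS["ux"] * ux)


# Hypothetical pages: a strong domain with thin content vs. a weaker domain with great content.
big_site = seo_score(authority=80, content=40, ux=60)    # 48 + 12 + 6 = 66
niche_site = seo_score(authority=50, content=90, ux=80)  # 30 + 27 + 8 = 65
print(round(big_site, 1), round(niche_site, 1))
```

With these numbers the strong domain still edges out the niche site (66 vs. 65) despite much weaker content, which is what a 60% weight on authority implies.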

Links

Once Google finds out that a page on another website links to your article, it increases your article’s SEO score. How much it increases depends on the following factors:

Content relevance. The page you’re getting the link from should be relevant to the topic of your article. If the topics are different, the link is much less valuable.

Domain authority. For example, a link from NYTimes.com is much more powerful than a link from a local newspaper’s site. Many indicators attempt to measure website authority, such as Domain Authority (DA) by Moz or Domain Rating (DR) by Ahrefs. All of them are reasonably accurate, so you can use any of them. By these measures, a link from a website with DA 63 is much more valuable than a link from a website with DA 32.

Do-follow links vs. no-follow links. A link can be marked so that it doesn’t benefit the SEO score of the site it points to. The HTML code of a link can include a rel attribute for this purpose. By default, a link without this marker is “do-follow”, which makes it count toward the SEO score. If the attribute is set to rel="nofollow", the link is marked and becomes almost useless for SEO. Obviously, do-follow links are much more powerful than no-follow links.
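In HTML, the marker is the link's rel attribute. The following Python sketch uses the standard library's html.parser to label each link in a snippet as do-follow or no-follow. Besides nofollow, Google also recognizes rel="sponsored" and rel="ugc" as hints that a link should not pass credit, so the sketch treats all three the same way. The sample HTML and URLs are hypothetical.

```python
from html.parser import HTMLParser

# rel values that ask search engines not to treat a link as an endorsement.
NON_FOLLOW_VALUES = {"nofollow", "sponsored", "ugc"}


class LinkAuditor(HTMLParser):
    """Collect (href, is_follow) pairs for every <a href=...> in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[tuple[str, bool]] = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if href is None:
            return
        # rel may hold several space-separated values, e.g. "nofollow sponsored".
        rel_values = set((attributes.get("rel") or "").lower().split())
        self.links.append((href, not (rel_values & NON_FOLLOW_VALUES)))


html = """
<p>Read <a href="https://example.com/roas-guide">this guide</a>
and <a href="https://example.com/ad" rel="nofollow sponsored">this offer</a>.</p>
"""
auditor = LinkAuditor()
auditor.feed(html)
for href, is_follow in auditor.links:
    print("do-follow" if is_follow else "no-follow", href)
```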

Internal links, which point to other pages on your own website, are also very important. With internal links you control how the “link juice” you’ve collected spreads across the pages of your website. That’s why every page should link to the homepage, and related articles should link to each other.
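An internal-linking audit can be automated once you have a map of which pages link where. The Python sketch below assumes a hypothetical site map (page path mapped to the set of internal paths it links to) and reports two problems related to the rules above: pages that don't link back to the homepage, and “orphan” pages that no other page links to, which therefore receive no internal link juice.

```python
def audit_internal_links(site: dict[str, set[str]], homepage: str = "/") -> dict[str, list[str]]:
    """Report pages that skip the homepage link and pages no other page links to."""
    missing_home = sorted(page for page, targets in site.items()
                          if page != homepage and homepage not in targets)
    # Pages that receive at least one internal link from a *different* page.
    linked_to = {t for page, targets in site.items() for t in targets if t != page}
    orphans = sorted(page for page in site if page != homepage and page not in linked_to)
    return {"missing_homepage_link": missing_home, "orphan_pages": orphans}


# Hypothetical site map: each page and the internal pages it links to.
site = {
    "/": {"/blog/roas-calculation", "/blog/roas-benchmarks"},
    "/blog/roas-calculation": {"/", "/blog/roas-benchmarks"},
    "/blog/roas-benchmarks": {"/blog/roas-calculation"},
    "/blog/old-post": {"/"},
}
print(audit_internal_links(site))
```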

Page content

Google gives you a hint about the content it favors every time you look at a search results page. Articles that hold the top spots are there for a reason: Google decided that their content is remarkably good at answering the question behind a particular search query. That means if you write a similar article and improve on it, that can be enough to outrank them.

For example, let’s reverse engineer the search results for the query “ROAS calculation”.

Now let’s check the second spot. This article is published on a website with DA 80. That is a huge difference. This is getting interesting: how is it possible that a website with mediocre authority outranks a monster with DA 80? Let’s compare their content. Spot #1 has only a few lines of copy and a useful calculator. Spot #2 has a bit more text and that’s it: no calculator, no images, no videos, just text. It looks like the calculator made the difference here. Google decided that since the search query contains the word “calculation”, the article with a calculator is better.

So if we wanted to outrank Spot #1 on content, what should we do?

- First, we’d need to write more copy.

- Second, we’d need to include a useful calculator.

- Third, we’d need to enhance the copy with a few images and a video.
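The calculator itself can be simple. ROAS (return on ad spend) is commonly computed as the revenue attributed to ads divided by the ad spend. Below is a minimal Python sketch of that logic; a real widget on a web page would more likely be written in JavaScript, and the campaign figures are hypothetical.

```python
def roas(revenue: float, ad_spend: float) -> float:
    """Return on ad spend: revenue attributed to ads divided by what the ads cost."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return revenue / ad_spend


# Hypothetical campaign: $5,000 in attributed revenue from $1,000 in ad spend.
ratio = roas(revenue=5000, ad_spend=1000)
print(f"ROAS = {ratio:.1f}:1 ({ratio:.0%})")
```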

It's fair to say that if two pages are published on different sites with similar domain authority and one of them ranks higher, that page has better content.

To wrap up the topic of content, always make sure the HTML code of your article is properly optimized. If you know little about it, google “on-page optimization”.
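As a starting point, a few common on-page checks can be scripted. The Python sketch below uses the standard library's html.parser to flag a missing title, a missing meta description, anything other than exactly one h1 heading, and images without alt text. This selection of checks is an assumption for illustration, not a complete on-page checklist, and the sample page is hypothetical.

```python
from html.parser import HTMLParser


class OnPageChecker(HTMLParser):
    """Record a few on-page elements: <title>, meta description, <h1>, <img> alt text."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.in_title = False
        self.has_meta_description = False
        self.h1_count = 0
        self.images_missing_alt = 0

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self.in_title = True
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.has_meta_description = bool(attributes.get("content"))
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "img" and not attributes.get("alt"):
            # Also flags alt="", which can be intentional for purely decorative images.
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def on_page_issues(html: str) -> list[str]:
    """Return human-readable issues found by OnPageChecker."""
    checker = OnPageChecker()
    checker.feed(html)
    issues = []
    if not checker.title.strip():
        issues.append("missing <title>")
    if not checker.has_meta_description:
        issues.append("missing meta description")
    if checker.h1_count != 1:
        issues.append(f"expected one <h1>, found {checker.h1_count}")
    if checker.images_missing_alt:
        issues.append(f"{checker.images_missing_alt} image(s) without alt text")
    return issues


page = """<html><head><title>ROAS calculation</title></head>
<body><h1>How to calculate ROAS</h1><img src="chart.png"></body></html>"""
print(on_page_issues(page))
```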

Speed and usability

A few notes about page speed and usability. By page speed I mean literally how fast your page loads. You won’t get rewarded for a fast website, but you might get penalized if it’s slow. Your site must also work perfectly on mobile devices. Check your website’s performance score for both desktop and mobile in Google PageSpeed Insights, and make sure it is at least 40 for both. Page speed and usability also directly influence the UX signals, which we’re about to cover next.
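The same scores can be fetched programmatically through the PageSpeed Insights API. The Python sketch below builds requests for the mobile and desktop strategies and reads the Lighthouse performance score, which the JSON reports on a 0-1 scale. It assumes the v5 endpoint and the response layout used in the code, and that the performance category is returned by default; check the official API reference before relying on it. The example URL is hypothetical, and the network call is left commented out.

```python
import json
import urllib.parse
import urllib.request

# PageSpeed Insights API v5 endpoint. An API key (key=...) is recommended
# for regular use; this sketch omits it.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"
MIN_SCORE = 40  # threshold suggested in the text, on the 0-100 scale


def psi_request_url(page_url: str, strategy: str) -> str:
    """Build the API request URL; strategy is "mobile" or "desktop"."""
    return PSI_ENDPOINT + "?" + urllib.parse.urlencode({"url": page_url, "strategy": strategy})


def performance_score(response: dict) -> int:
    """Extract the Lighthouse performance score (0.0-1.0 in the JSON) as 0-100."""
    return round(response["lighthouseResult"]["categories"]["performance"]["score"] * 100)


def check_page(page_url: str) -> dict[str, int]:
    """Fetch performance scores for both strategies (requires network access)."""
    scores = {}
    for strategy in ("mobile", "desktop"):
        with urllib.request.urlopen(psi_request_url(page_url, strategy), timeout=120) as resp:
            scores[strategy] = performance_score(json.load(resp))
    return scores


# Usage (makes network calls, so not run here):
# for strategy, score in check_page("https://example.com/").items():
#     print(strategy, score, "OK" if score >= MIN_SCORE else "too slow")
```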

UX signals

Google strives to find out whether the search results it provides actually satisfy users. It’s believed that a few years ago Google started tracking what we’ll call “user satisfaction”, and that the ranking algorithm now uses these insights. Here’s how it's supposed to work.

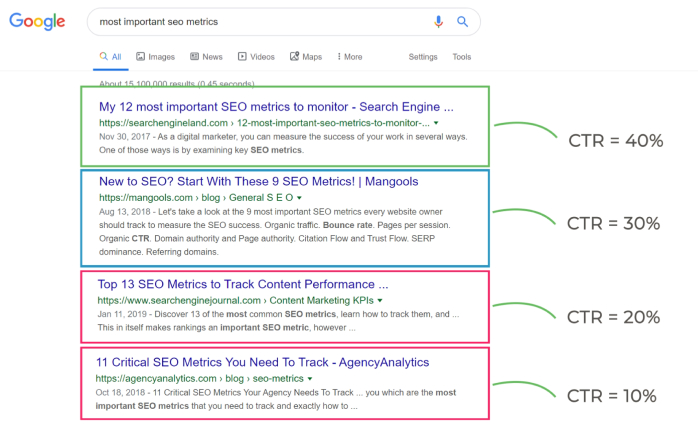

Let's take a look at a search engine results page (SERP).

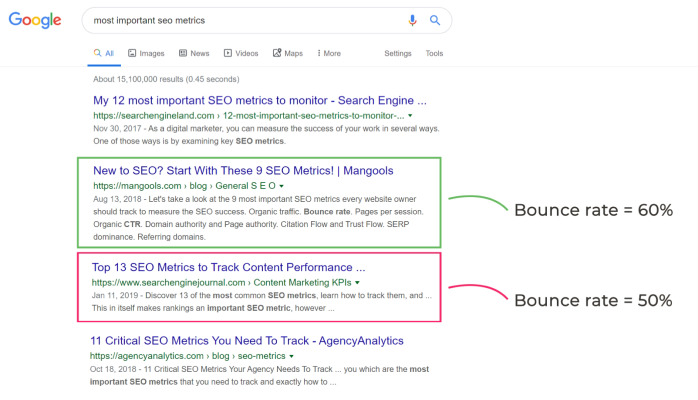

Another UX signal Google measures is the bounce rate. When someone clicks a search result, Google measures how long they stay on the page. If the user quickly returns to the search results, that’s called a bounce, and it’s a strong indicator that the page didn’t answer the user’s question.
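Google doesn't publish how, or whether, it computes such a metric, but the idea of a bounce rate is easy to sketch. The Python example below assumes a hypothetical click log that records how many seconds each visitor stayed before returning to the results page, and a hypothetical 10-second threshold for what counts as a quick return.

```python
from dataclasses import dataclass

# Hypothetical threshold: a return to the results page within this many seconds is a bounce.
BOUNCE_THRESHOLD_SECONDS = 10


@dataclass
class Click:
    url: str
    seconds_on_page: float  # time between the click and the return to the results page


def bounce_rate(clicks: list[Click], url: str) -> float:
    """Share of clicks on `url` where the user came back to the results almost immediately."""
    page_clicks = [c for c in clicks if c.url == url]
    if not page_clicks:
        return 0.0
    bounces = sum(1 for c in page_clicks if c.seconds_on_page < BOUNCE_THRESHOLD_SECONDS)
    return bounces / len(page_clicks)


# Hypothetical click log for two results on the same SERP.
clicks = [
    Click("https://a.example/roas", 4), Click("https://a.example/roas", 95),
    Click("https://a.example/roas", 6), Click("https://b.example/roas", 120),
    Click("https://b.example/roas", 80), Click("https://b.example/roas", 3),
]
for url in ("https://a.example/roas", "https://b.example/roas"):
    print(url, f"{bounce_rate(clicks, url):.0%}")
```

Under these assumptions the first result has a bounce rate of 2/3 and the second 1/3, so the second looks more satisfying to searchers.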

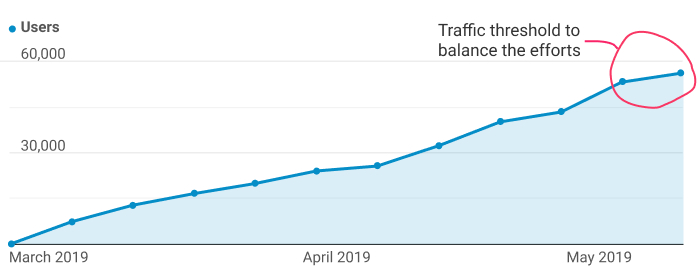

Yet another UX signal that might boost your ranking is an increase in page traffic from other channels. For example, if you start promoting a particular article with Facebook ads and more people visit it every day, Google is likely to increase its ranking.

White-hat and black-hat SEO distinction

We’ve already seen that Google’s algorithm is very sophisticated and advanced. Still, it’s an algorithm, so some people try to manipulate it. SEO techniques designed to manipulate Google’s algorithm are labeled Black-Hat SEO, as opposed to the White-Hat SEO we discussed earlier.

Google punishes anyone who gets caught using a Black-Hat SEO method, so it’s important to understand how these methods work in order to know what to avoid.

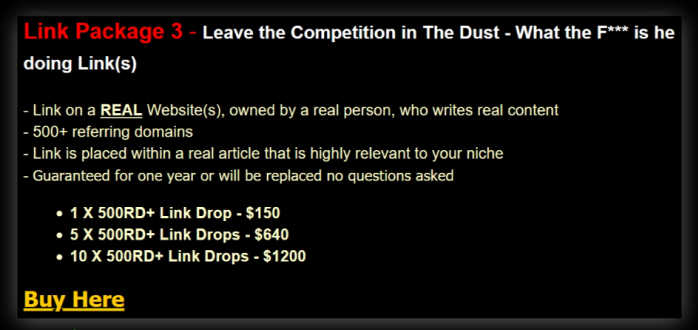

Black-hat SEO method #1 – Buying backlinks

Google’s guidelines forbid paying other website owners for backlinks to your site. Instead, build your backlink profile with a variety of so-called “outreach techniques” to acquire links naturally.

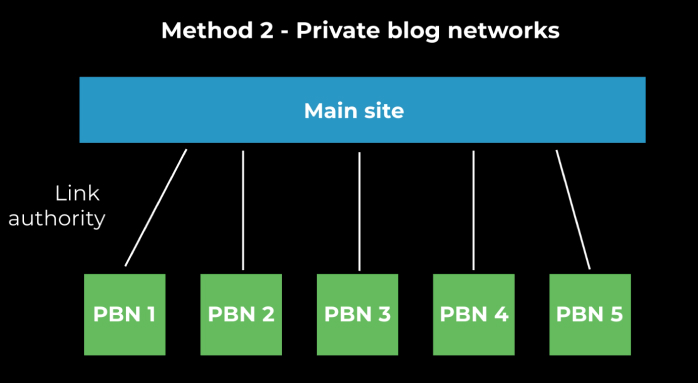

Black-hat SEO method #2 – Building a private blog network (PBN)

A PBN is a network of artificially created websites only used to build links to your main site.

Black-hat SEO method #3 – Content quality manipulation

So how does Google vet content quality? Since Google has crawled practically the whole Internet, it knows that any given topic is usually described through specific subtopics, sentences and terms. To capture these patterns, Google developed a number of semantic algorithms similar to LSI and TF-IDF. So it’s fair to say that Google expects content that covers a given topic superbly to include particular subtopics, sentences and terms. If your content meets these expectations, it ranks higher.
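TF-IDF itself is a well-known, public technique: a term scores high in a document when it appears often there (term frequency) but rarely across the rest of the corpus (inverse document frequency). Google's own semantic algorithms are not public, so the Python sketch below only illustrates the classic formula. It treats a few hypothetical top-ranking keto articles as one document and compares it against unrelated, equally hypothetical background texts, so the highest-scoring terms are the ones that characterize the topic.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z]+", text.lower())


def tf_idf(documents: list[str]) -> list[dict[str, float]]:
    """Classic TF-IDF: term frequency in a document times log(N / document frequency)."""
    tokenized = [tokenize(doc) for doc in documents]
    n_docs = len(tokenized)
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({term: (count / total) * math.log(n_docs / doc_freq[term])
                       for term, count in counts.items()})
    return scores


def top_terms(scores: dict[str, float], k: int) -> list[str]:
    return [term for term, _ in sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:k]]


# Hypothetical top-ranking articles on the topic, merged into one document.
topic_pages = [
    "the keto diet cuts carbs so your body enters ketosis and burns fat",
    "on the keto diet you track net carbs and aim for ketosis",
    "keto diet snacks are low in carbs and high in fat",
]
# Hypothetical unrelated background texts.
background = [
    "the best running shoes for your first marathon and the training plan",
    "the history of the roman empire and its emperors",
    "how to bake sourdough bread at home in your oven",
]
scores = tf_idf([" ".join(topic_pages)] + background)
print(top_terms(scores[0], 5))
```

With this tiny corpus the top terms are keto, diet and carbs, followed by ketosis and fat: exactly the kind of topic-defining vocabulary that well-researched content tends to include.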

For example, say you hire a copywriter on Upwork for $8/hour to write an article about the keto diet. Since this copywriter is not a nutrition expert, the quality of the article will be quite poor. However, if the copywriter works several LSI keywords (example of a great LSI tool) into the copy, this artificially increases the article’s quality in Google’s eyes.